Results

Benchmarks across three axes: weight quantization quality (GSM8K), KV-cache serving speed (64k-context A/B), and detection accuracy (COCO128).

Weight quantization

Quality vs uniform 4-bit

OptiQ's per-layer bit allocation recovers accuracy lost to uniform 4-bit quantization. Measured on GSM8K with 200 samples per model, using the same prompt template and sampling settings for both variants.

LLMs: GSM8K (200 samples)

| Model | Uniform 4-bit | OptiQ 4-bit | Delta |
|---|---|---|---|
| Qwen3.5-0.8B | 11.5% | 27.0% | +15.5pp |
| Qwen3.5-2B | 48.5% | 48.0% | -0.5pp |
| Qwen3.5-4B | 79.5% | 81.5% | +2.0pp |
| Qwen3.5-9B | 90.0% | 90.0% | 0.0pp |
| gemma-4-e2b-it | 5.5% | 13.0% | +7.5pp |
| gemma-4-e4b-it | 23.5% | 55.5% | +32.0pp |

Pattern: OptiQ's gains grow with how much uniform 4-bit degrades the model. On saturated models (Qwen3.5-9B at 90%), there is nothing to recover, and Qwen3.5-2B's -0.5pp amounts to a single question out of 200. On models that uniform quantization breaks (gemma-4-e4b-it: 23.5% → 55.5%), the gain is dramatic.
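With 200 samples per model, small deltas sit inside sampling noise. A rough check is the standard error of the difference between the two accuracies. The sketch below treats each run as an independent binomial sample. That is an approximation: both variants see the same 200 questions, so a paired test would usually be tighter. `delta_with_se` is a hypothetical helper, not part of OptiQ:

```python
import math

def delta_with_se(uniform_acc, optiq_acc, n=200):
    """Accuracy delta in percentage points plus an approximate standard error,
    treating the two runs as independent binomial samples of size n."""
    delta_pp = 100.0 * (optiq_acc - uniform_acc)
    var = uniform_acc * (1 - uniform_acc) / n + optiq_acc * (1 - optiq_acc) / n
    return delta_pp, 100.0 * math.sqrt(var)

for name, uni, opt in [("gemma-4-e4b-it", 0.235, 0.555), ("Qwen3.5-2B", 0.485, 0.480)]:
    d, se = delta_with_se(uni, opt)
    print(f"{name}: {d:+.1f}pp, SE ~{se:.1f}pp, {d / se:+.1f} SEs")
```

For gemma-4-e4b-it, the +32.0pp gain is roughly 7 standard errors (SE about 4.6pp). For Qwen3.5-2B, the -0.5pp is a small fraction of one standard error (about 5.0pp).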

KV cache serving

Mixed-precision KV A/B at 64k context

Apple M3 Max (36 GB). 64,000-token English prose prompt, streaming 500 output tokens. Comparing stock `mlx_lm.server` (fp16 KV) vs `optiq serve --kv-config` (per-layer sensitivity-guided KV quantization).

Decode tok/s at 64k

| Model | fp16 TTFT | fp16 decode | Mixed TTFT | Mixed decode | Decode speedup |
|---|---|---|---|---|---|
| Qwen3.5-0.8B | 34.5s | 47.2 tok/s | 40.5s | 42.4 tok/s | -10% |
| Qwen3.5-2B | 82.8s | 27.9 tok/s | 161.7s | 41.8 tok/s | +50% |
| Qwen3.5-4B | 165.8s | 8.1 tok/s | 252.0s | 13.1 tok/s | +62% |
| Qwen3.5-9B | 214.8s | 20.7 tok/s | 163.8s | 27.1 tok/s | +31% |
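The page does not say exactly how TTFT and decode rate are derived from a streamed response. This sketch assumes a common convention rather than the benchmark harness's actual code:

- TTFT is the first token's arrival time minus the request start.
- Decode rate is the number of tokens after the first, divided by the time from first to last token.

`stream_timing` and `StreamTiming` are hypothetical names:

```python
from dataclasses import dataclass

@dataclass
class StreamTiming:
    ttft_s: float            # seconds from request start to first token
    decode_tok_per_s: float  # tokens/s measured after the first token

def stream_timing(request_start, token_times):
    """Summarize one streamed response from token arrival times (seconds,
    same clock as request_start, e.g. time.perf_counter())."""
    if len(token_times) < 2:
        raise ValueError("need at least two tokens to measure a decode rate")
    ttft = token_times[0] - request_start
    # Exclude the first token: its latency is dominated by prefill, not decode.
    decode_span = token_times[-1] - token_times[0]
    return StreamTiming(ttft, (len(token_times) - 1) / decode_span)

# Synthetic example: first token after 2 s, then one token every 0.5 s.
timing = stream_timing(0.0, [2.0 + 0.5 * i for i in range(5)])
print(timing)
```

Splitting the metrics this way keeps prefill cost (dominant at 64k context) out of the decode number.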

Takeaway: for Qwen3.5-2B and larger, mixed-precision KV gives a 31–62% decode speedup at 64k context. Qwen3.5-0.8B is too small to benefit. `optiq kv-cache`'s sensitivity pass correctly picks uniform 4-bit for it (no layer needs 8-bit protection), but on the M3 Max even that is about 10% slower than fp16 at this scale. Prefill behaves differently: TTFT rises under mixed KV for 0.8B, 2B and 4B, and drops only for 9B.
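The Decode speedup column is the ratio of the two decode rates, minus one. This sketch recomputes that column from the table values and also reports the TTFT change for the same runs. `pct_change` is a hypothetical helper:

```python
# (fp16 decode tok/s, mixed decode tok/s, fp16 TTFT s, mixed TTFT s), from the table
RESULTS = {
    "Qwen3.5-0.8B": (47.2, 42.4, 34.5, 40.5),
    "Qwen3.5-2B": (27.9, 41.8, 82.8, 161.7),
    "Qwen3.5-4B": (8.1, 13.1, 165.8, 252.0),
    "Qwen3.5-9B": (20.7, 27.1, 214.8, 163.8),
}

def pct_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return 100.0 * (after / before - 1.0)

for model, (fp16_dec, mixed_dec, fp16_ttft, mixed_ttft) in RESULTS.items():
    print(f"{model}: decode {pct_change(fp16_dec, mixed_dec):+.0f}%, "
          f"TTFT {pct_change(fp16_ttft, mixed_ttft):+.0f}%")
```

Rounded to whole percent, the decode figures match the table's -10%, +50%, +62% and +31%.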

Per-layer KV configs (generated by `optiq kv-cache --target-bits 4.5`)

| Model | Full-attn layers | Config | Avg bits |
|---|---|---|---|
| Qwen3.5-0.8B | 6 of 24 | 6 @ 4-bit | 4.00 |
| Qwen3.5-2B | 6 of 24 | 6 @ 4-bit | 4.00 |
| Qwen3.5-4B | 8 of 32 | 7 @ 4-bit + 1 @ 8-bit (layer 3) | 4.50 |
| Qwen3.5-9B | 8 of 32 | 7 @ 4-bit + 1 @ 8-bit (layer 3) | 4.50 |
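How `optiq kv-cache` turns sensitivity scores into bit-widths is not described here. One simple budget rule happens to reproduce the Avg bits column:

1. Start every full-attention layer at 4-bit.
2. Promote the most sensitive layers to 8-bit while the average stays at or below `--target-bits`.

This is a hypothetical sketch, not OptiQ's implementation, and the sensitivity scores are invented:

```python
def allocate_kv_bits(sensitivity, target_bits, low=4, high=8):
    """Greedy sketch: all layers start at `low` bits; the most sensitive are
    promoted to `high` bits while the average stays <= target_bits."""
    bits = {layer: low for layer in sensitivity}
    budget = target_bits * len(bits)  # total bits allowed across all layers
    used = low * len(bits)
    # Visit layers from most to least sensitive; every promotion costs the same.
    for layer in sorted(sensitivity, key=sensitivity.get, reverse=True):
        if used + (high - low) > budget:
            break
        bits[layer] = high
        used += high - low
    return bits

def avg_bits(bits):
    return sum(bits.values()) / len(bits)

# Invented scores for full-attention layers 3, 7, ..., 31 of a 32-layer model.
scores_32 = {layer: 1.0 for layer in range(3, 32, 4)}
scores_32[3] = 5.0  # hypothetical: layer 3 is the most sensitive
cfg = allocate_kv_bits(scores_32, target_bits=4.5)
print(cfg, avg_bits(cfg))
```

The 32-layer models can afford exactly one 8-bit layer under this rule (36 of 36 budgeted bits). The 24-layer models stay uniform 4-bit because one 8-bit layer would lift their 6-layer average to 4.67, above the 4.5 target. Whether OptiQ's sensitivity pass reasons the same way is an assumption.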

Why layer 3? In Qwen3.5's hybrid architecture, layer 3 is the first full-attention layer (layers 0, 1 and 2 use linear attention). It is the most KV-sensitive layer in both the 4B and 9B models. Protecting it at 8-bit also moves it onto `mx.quantized_matmul`'s fast path on Apple Silicon, so here quality preservation and speed point in the same direction.
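Two facts from the config table fit a one-in-four layout: the counts (6 of 24 and 8 of 32 full-attention layers) and layer 3 being the first full-attention layer. The sketch below assumes that interval; it is inferred from those counts, not read from the model config. It lists which layers would hold a full-attention KV cache and maps the 4B/9B config onto them. `full_attention_layers` is a hypothetical helper:

```python
def full_attention_layers(n_layers, interval=4):
    """Full-attention layer indices, assuming every `interval`-th layer is
    full attention (3, 7, 11, ...) and the rest use linear attention."""
    return [i for i in range(n_layers) if i % interval == interval - 1]

for n in (24, 32):
    layers = full_attention_layers(n)
    print(n, len(layers), layers)

# 4B/9B config from the table: layer 3 at 8-bit, the other full-attn layers at 4-bit.
kv_bits = {i: (8 if i == 3 else 4) for i in full_attention_layers(32)}
print(kv_bits, sum(kv_bits.values()) / len(kv_bits))
```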

TurboQuant research

Rotated-space KV attention

The research path: rotation-based vector quantization that preserves inner products for attention, compared against mlx-lm's affine `QuantizedKVCache`.
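A small NumPy sketch illustrates why rotating before quantizing helps inner products. It is not TurboQuant's actual quantizer or codebook. It applies a random orthogonal rotation (QR of a Gaussian matrix) to keys and queries, then a plain per-vector min/max 4-bit affine quantizer. It compares the resulting inner-product error against quantizing the unrotated keys. The data is synthetic, with one hypothetical outlier channel:

```python
import numpy as np

def affine_quantize(x, bits=4):
    """Per-row min/max affine quantization; returns the dequantized values."""
    levels = 2**bits - 1
    lo = x.min(axis=1, keepdims=True)
    hi = x.max(axis=1, keepdims=True)
    scale = (hi - lo) / levels
    scale = np.where(scale == 0, 1.0, scale)  # guard against constant rows
    codes = np.round((x - lo) / scale)        # integer codes in [0, levels]
    return codes * scale + lo

def random_rotation(d, rng):
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    return q * np.sign(np.diag(r))  # sign fix so the draw is uniform (Haar)

def inner_product_error(bits=4, d=128, n_keys=512, n_queries=64, outlier=50.0, seed=0):
    rng = np.random.default_rng(seed)
    keys = rng.standard_normal((n_keys, d))
    keys[:, 0] *= outlier                      # synthetic outlier channel
    queries = rng.standard_normal((n_queries, d))
    exact = queries @ keys.T

    # Baseline: quantize keys in their original coordinates.
    plain = queries @ affine_quantize(keys, bits).T

    # Rotate keys and queries by the same orthogonal R, then quantize the keys.
    rot = random_rotation(d, rng)
    keys_r, queries_r = keys @ rot.T, queries @ rot.T
    rotated = queries_r @ affine_quantize(keys_r, bits).T

    return np.abs(plain - exact).mean(), np.abs(rotated - exact).mean()

plain_err, rot_err = inner_product_error()
print(f"mean |error|: unrotated {plain_err:.2f}, rotated {rot_err:.2f}")
```

Before quantization, `queries_r @ keys_r.T` equals `queries @ keys.T` because the rotation is orthogonal. The rotation only spreads the outlier channel's energy across all coordinates, which shrinks the per-vector range the 4-bit quantizer has to cover.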

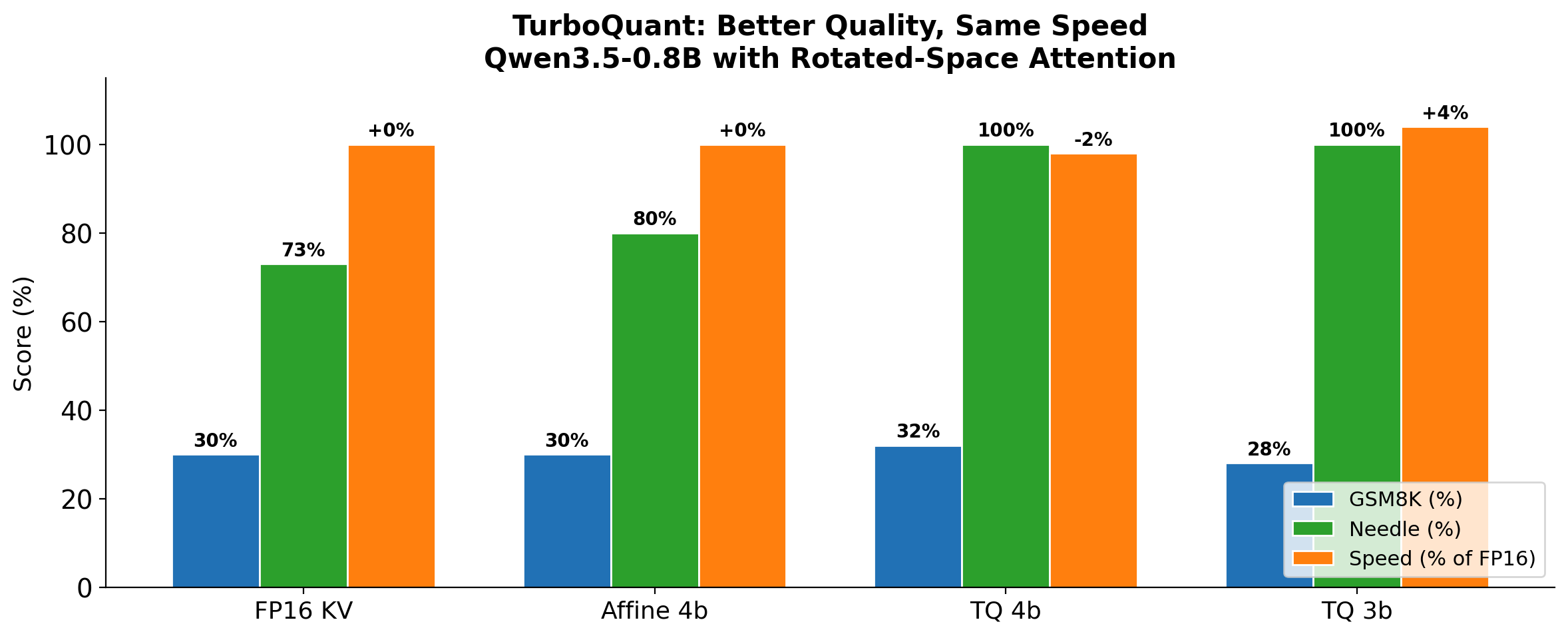

Quality + speed

TurboQuant 4-bit: better reasoning (32% vs 30%), perfect retrieval (100% vs 73%), and essentially unchanged speed (-2%).

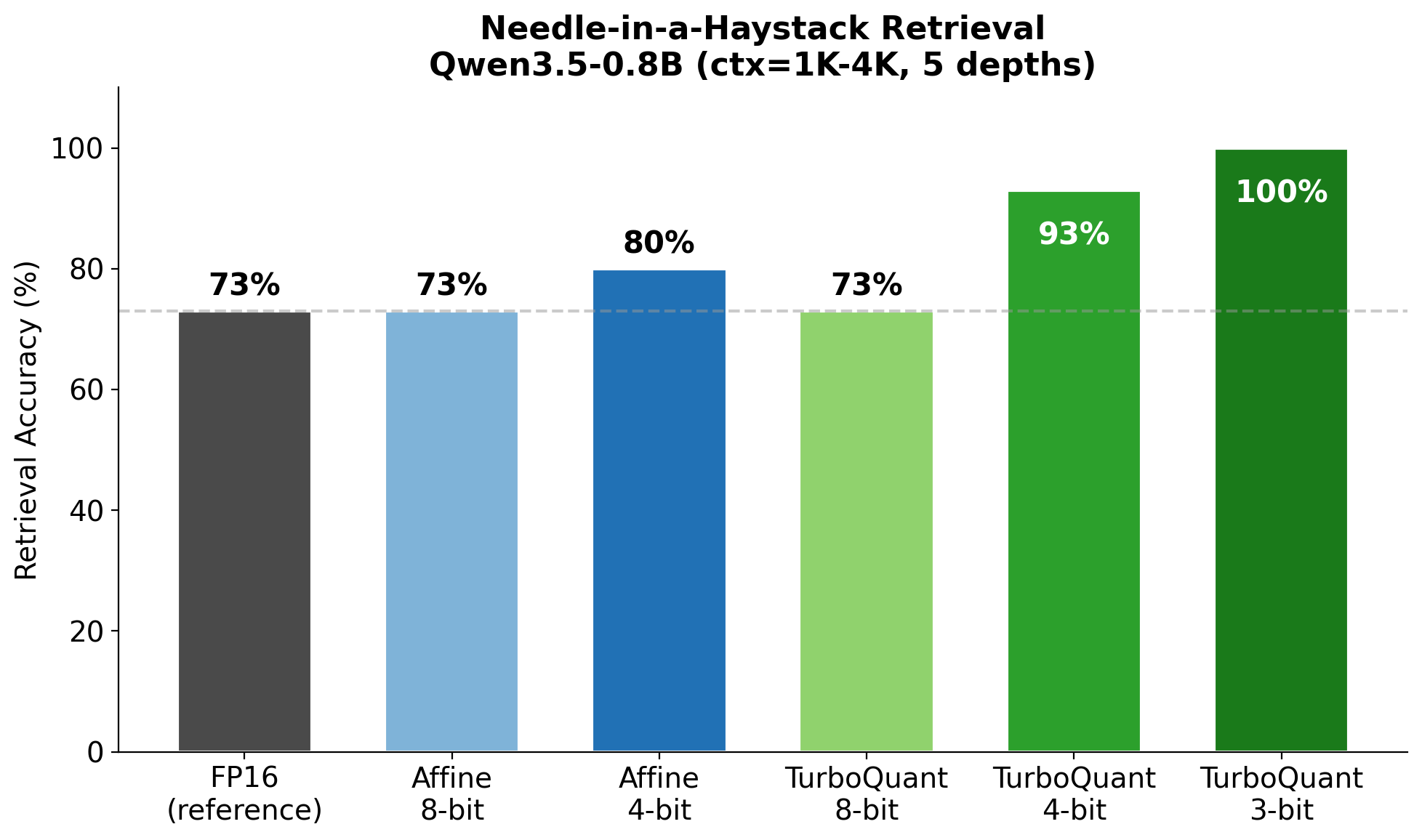

Needle retrieval

100% retrieval at 4-bit vs 73% for affine: rotated-space quantization preserves inner products across all sequence positions.

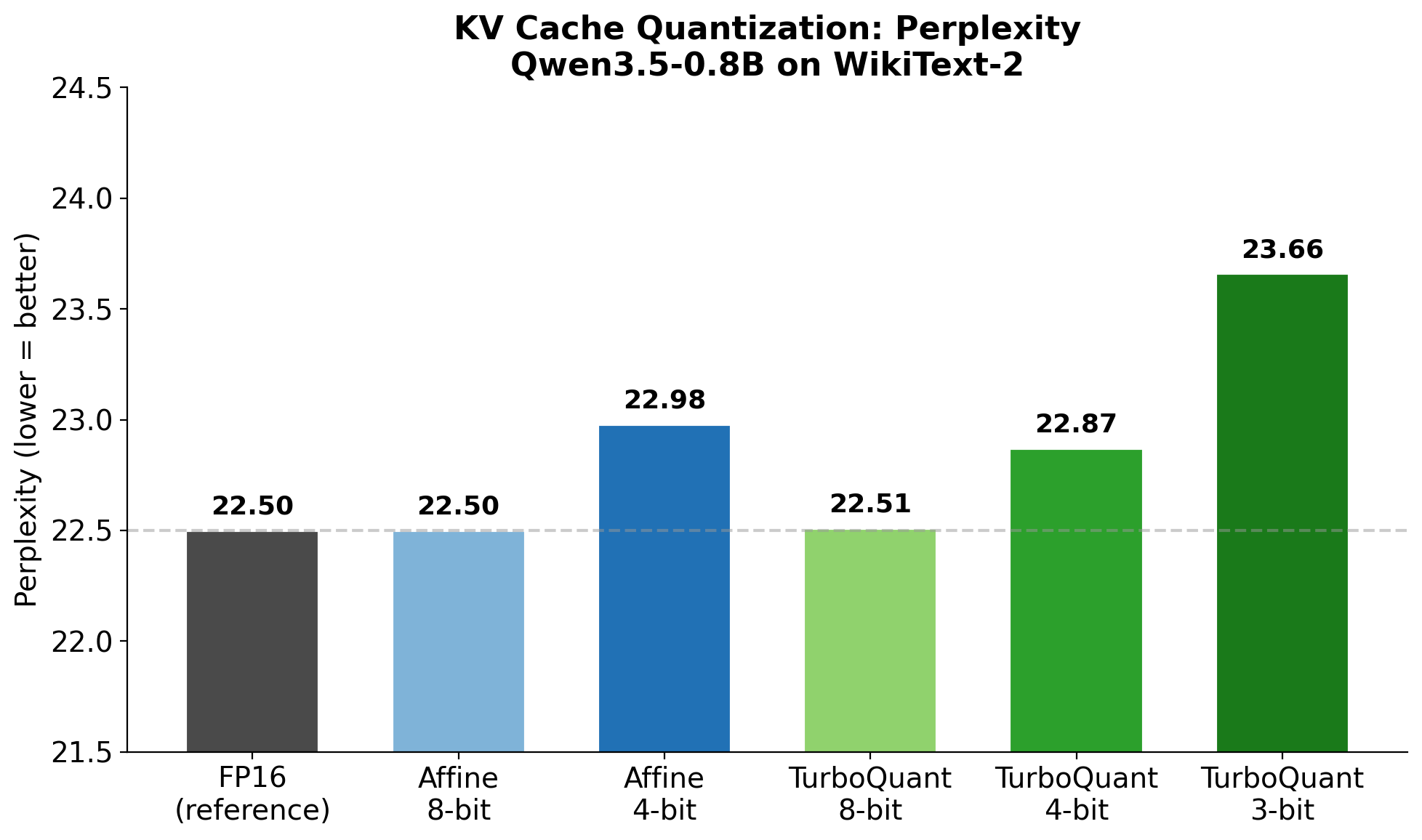

Perplexity

A tight perplexity gap at matched bit-widths: TurboQuant MSE 4-bit adds +0.37 PPL vs +0.48 for affine.

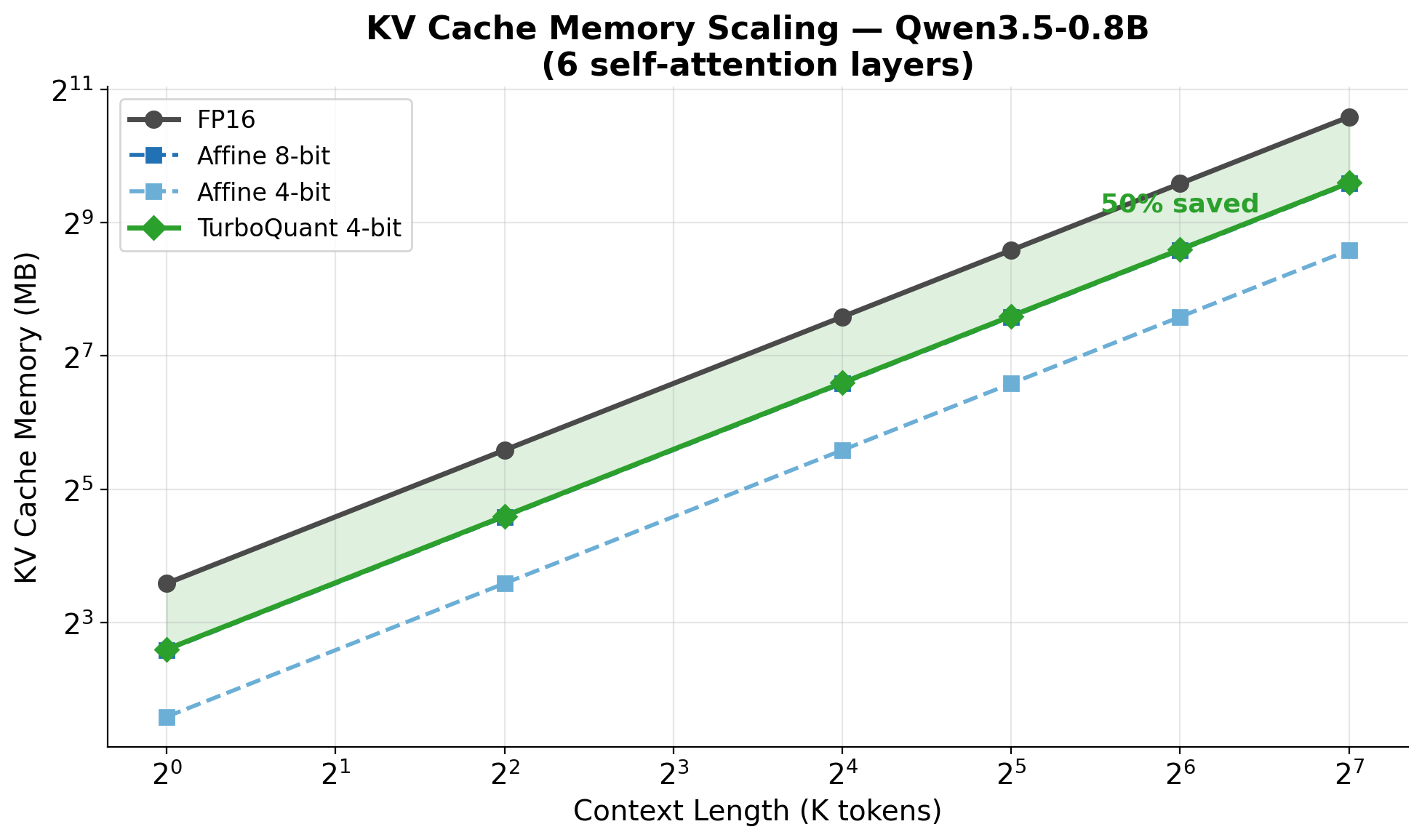

Memory scaling

KV storage vs sequence length: at 4 bits, TurboQuant stores each token's keys and values in 4× less memory than fp16.
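The 4× figure follows from bit-width alone: 16 bits per value down to 4. The arithmetic sketch below uses hypothetical model dimensions (8 KV-caching layers, 4 KV heads, head_dim 128) at 64k tokens. It also covers the 4.5-bit mixed config from the KV section. It ignores quantization metadata, so any per-group scales or per-vector norms a real scheme stores would pull the ratios slightly below these numbers. `kv_cache_bytes` is a hypothetical helper:

```python
def kv_cache_bytes(seq_len, bits_per_layer, n_kv_heads, head_dim):
    """Bytes for keys + values across layers, ignoring quantization metadata
    (scales, zero points, norms). bits_per_layer lists one bit-width per layer."""
    values_per_token_per_layer = 2 * n_kv_heads * head_dim  # K and V
    total_bits = sum(seq_len * values_per_token_per_layer * b for b in bits_per_layer)
    return total_bits / 8

# Hypothetical dimensions, chosen only for illustration.
dims = dict(seq_len=64_000, n_kv_heads=4, head_dim=128)
fp16 = kv_cache_bytes(bits_per_layer=[16] * 8, **dims)
q4 = kv_cache_bytes(bits_per_layer=[4] * 8, **dims)
mixed = kv_cache_bytes(bits_per_layer=[8] + [4] * 7, **dims)  # 7 @ 4-bit + 1 @ 8-bit

print(f"fp16 {fp16 / 2**20:.0f} MiB, 4-bit {q4 / 2**20:.0f} MiB, mixed {mixed / 2**20:.0f} MiB")
# fp16 1000 MiB, 4-bit 250 MiB, mixed 281 MiB
print(f"4-bit saves {fp16 / q4:.2f}x, mixed saves {fp16 / mixed:.2f}x")
```

The 4.5-bit mixed config trades some of the 4× saving (about 3.56×) for 8-bit protection of one layer.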

YOLO object detection

COCO128 detection deltas

OptiQ 6-bit YOLO models vs the original fp16. Quality measured on COCO128 (128 images, standard detection metric).

| Model | Original | OptiQ | Compression | Detection delta |
|---|---|---|---|---|
| YOLO26n | 9.9 MB | 2.5 MB | 3.9× | -1.6% |
| YOLO26s | 38.4 MB | 8.9 MB | 4.3× | -7.0% |
| YOLO26m | 83.8 MB | 18.9 MB | 4.4× | +0.1% |
| YOLO26l | 100.7 MB | 22.9 MB | 4.4× | 0.0% |
| YOLO26x | 225.5 MB | 50.6 MB | 4.5× | -1.1% |