Experiments

Five research threads feed OptIQ: weight sensitivity, KV-cache sensitivity, rotated-space quantization, sensitivity-aware LoRA, and mounted hot-swap adapters. Each is a separate pipeline, run from the CLI or imported as a library.

Thread 1

Mixed-precision weights

Uniform quantization wastes bits. Some layers are far more sensitive than others — OptIQ measures that directly via KL divergence on calibration data, then allocates bits where they matter most.

Weight quantization pipeline

Load (HuggingFace) → Sensitivity (KL divergence) → Optimize (greedy knapsack) → Convert (MLX format)

Run weight sensitivity + conversion (bash)

    $ optiq convert Qwen/Qwen3.5-9B --target-bpw 4.5 --candidate-bits 4,8

OptIQ protects layers like lm_head, embed_tokens, and the first/last attention blocks at 8-bit; the optimizer allocates the remaining bit budget by greedy knapsack on KL-reduction-per-bit. Against uniform 4-bit on Qwen3.5-0.8B, this recovers +15.5pp of GSM8K accuracy; against uniform 4-bit on Gemma-4-e4b, +32pp.
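The allocation step can be pictured with a small sketch. This is not OptIQ's implementation: the `Layer` record, the `allocate_bits` function, the `protected` argument, and every number below are hypothetical, chosen only to show a greedy knapsack that ranks layers by KL reduction per extra bit under a bits-per-weight budget.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    n_params: int   # number of weights in the layer
    kl_low: float   # KL divergence vs. full precision at the low bit-width
    kl_high: float  # KL divergence vs. full precision at the high bit-width


def allocate_bits(layers, target_bpw, protected=(), low=4, high=8):
    """Greedy knapsack sketch: upgrade layers from low to high bit-width,
    best KL reduction per extra bit first, until the budget is used up."""
    total_params = sum(l.n_params for l in layers)
    budget = target_bpw * total_params      # total bits we may spend
    bits = {l.name: low for l in layers}
    spent = low * total_params              # everything starts at `low`

    def upgrade_cost(l):
        return (high - low) * l.n_params

    # Protected layers are forced to the high bit-width before optimizing.
    for l in layers:
        if l.name in protected:
            bits[l.name] = high
            spent += upgrade_cost(l)

    candidates = [l for l in layers if l.name not in protected]
    candidates.sort(
        key=lambda l: (l.kl_low - l.kl_high) / upgrade_cost(l),
        reverse=True,
    )
    for l in candidates:
        if spent + upgrade_cost(l) <= budget:
            bits[l.name] = high
            spent += upgrade_cost(l)

    return bits, spent / total_params


# Made-up sensitivities for a toy five-layer model.
layers = [
    Layer("embed_tokens", 25, 0.50, 0.01),
    Layer("layers.0.self_attn", 50, 0.40, 0.02),
    Layer("layers.1.mlp", 450, 0.05, 0.01),
    Layer("layers.2.mlp", 450, 0.04, 0.01),
    Layer("lm_head", 25, 0.30, 0.01),
]
bits, achieved_bpw = allocate_bits(
    layers, target_bpw=4.5, protected={"embed_tokens", "lm_head"}
)
print(bits, achieved_bpw)
```

In this toy run the small but sensitive attention block is upgraded, while the large MLP layers stay at 4-bit because upgrading either would exceed the budget.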

Thread 2

Mixed-precision KV cache serving

The KV cache is a separate sensitivity problem. Layer 0's KV is often orders of magnitude more sensitive than the average layer — uniform 4-bit KV is catastrophic. Per-layer KV sensitivity is the analogue of weight sensitivity, run at serving time.

1. Measure per-layer KV sensitivity (bash)

    $ optiq kv-cache mlx-community/Qwen3.5-9B-OptiQ-4bit \
        --target-bits 4.5 \
        --candidate-bits 4,8 \
        -o optiq_output/kv_cache/qwen35_9b
    # writes optiq_output/kv_cache/qwen35_9b/kv_config.json
    # [{"layer_idx":3,"bits":8,"group_size":64}, ... ]
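To inspect a config of this shape before serving, a short script is enough. The sketch below assumes the file is a JSON list of per-layer entries with the three keys shown in the comment above; the sample values and the `summarize_kv_config` helper are invented for illustration, and the unweighted mean it reports may not match how `--target-bits` is defined.

```python
import json
from collections import Counter

# Illustrative contents in the shape shown above; the values are made up.
sample = """[
  {"layer_idx": 0, "bits": 8, "group_size": 64},
  {"layer_idx": 1, "bits": 4, "group_size": 64},
  {"layer_idx": 2, "bits": 4, "group_size": 64},
  {"layer_idx": 3, "bits": 8, "group_size": 64}
]"""


def summarize_kv_config(entries):
    """Return per-layer bits, a histogram of bit-widths, and the mean bits."""
    bits_by_layer = {e["layer_idx"]: e["bits"] for e in entries}
    histogram = Counter(bits_by_layer.values())
    mean_bits = sum(bits_by_layer.values()) / len(bits_by_layer)
    return bits_by_layer, histogram, mean_bits


bits_by_layer, histogram, mean_bits = summarize_kv_config(json.loads(sample))
print(dict(histogram), mean_bits)
```

For a real run, replace `json.loads(sample)` with `json.load(open(path))` on the generated `kv_config.json`.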

2. Serve with the mixed-precision config (bash)

    $ optiq serve \
        --kv-config optiq_output/kv_cache/qwen35_9b/kv_config.json \
        --model mlx-community/Qwen3.5-9B-OptiQ-4bit \
        --host 127.0.0.1 --port 8080 \
        --max-tokens 32768 --temp 0.6 --top-p 0.95 --top-k 20

Why it's faster, not just smaller: mx.quantized_matmul on Apple Silicon handles the 8-bit fast path more efficiently than the 4-bit one. Protecting a single sensitive layer at 8-bit (while the rest stay 4-bit) flips that layer's attention into the faster kernel, and because it's the KV-sensitive layer, output quality is preserved. See Results for the 64k-context A/B across Qwen3.5 sizes.

Thread 3

TurboQuant — rotated-space KV attention

For quality-critical KV compression beyond mlx-lm's affine quantization: vector quantization on a random orthogonal rotation preserves inner products, and rotated-space attention avoids the per-key dequant cost that naive rotated quantization would incur.

TurboQuant KV cache

Rotate (random orthogonal R) → Quantize (Lloyd-Max centroids) → Attend (rotated-space SDPA) → Output (post-rotate result)

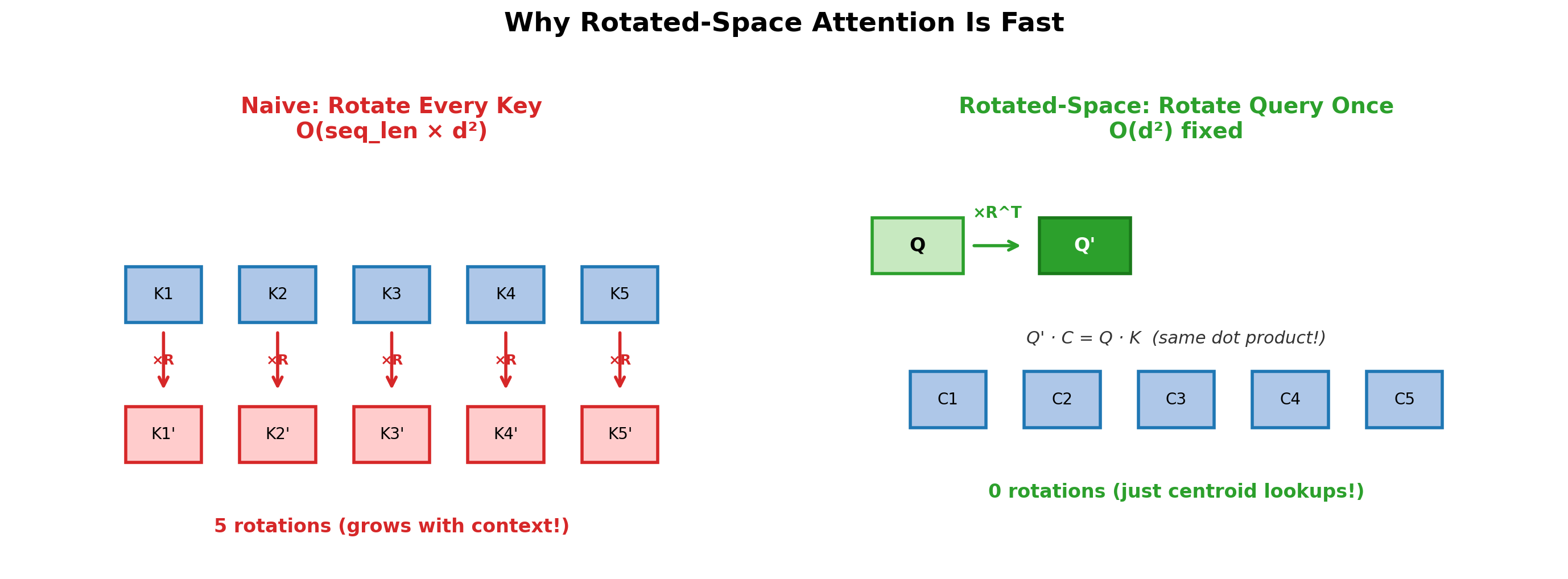

Rotated-space attention

Rotate the query once instead of every stored key. O(d²) fixed vs O(seq_len × d²) per token.
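The identity behind this is that an orthogonal rotation preserves inner products: (Rq)·(Rk) = q·k. The NumPy sketch below checks that numerically. It is not TurboQuant's code: quantization is omitted entirely, and storing the values in rotated space as well (so the output is rotated back once at the end) is an assumption based on the "post-rotate result" step in the pipeline above.

```python
import numpy as np

rng = np.random.default_rng(0)
d, seq_len = 64, 128

# Random orthogonal matrix from the QR decomposition of a Gaussian matrix.
R, _ = np.linalg.qr(rng.standard_normal((d, d)))

q = rng.standard_normal(d)
K = rng.standard_normal((seq_len, d))
V = rng.standard_normal((seq_len, d))


def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()


# Reference attention in the original space.
ref = softmax(K @ q / np.sqrt(d)) @ V

# The cache holds rotated keys and values, rotated once when they are written.
# (A real cache would quantize them here; this sketch skips that step.)
K_rot = K @ R.T
V_rot = V @ R.T

# Per decoded token: rotate the query once, attend in rotated space,
# then rotate the single output vector back.
q_rot = R @ q
out_rot = softmax(K_rot @ q_rot / np.sqrt(d)) @ V_rot
out = out_rot @ R

print(np.allclose(K_rot @ q_rot, K @ q), np.allclose(out, ref))
```

The per-token work is two d×d matrix-vector products (query in, output out), independent of how many keys are cached.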

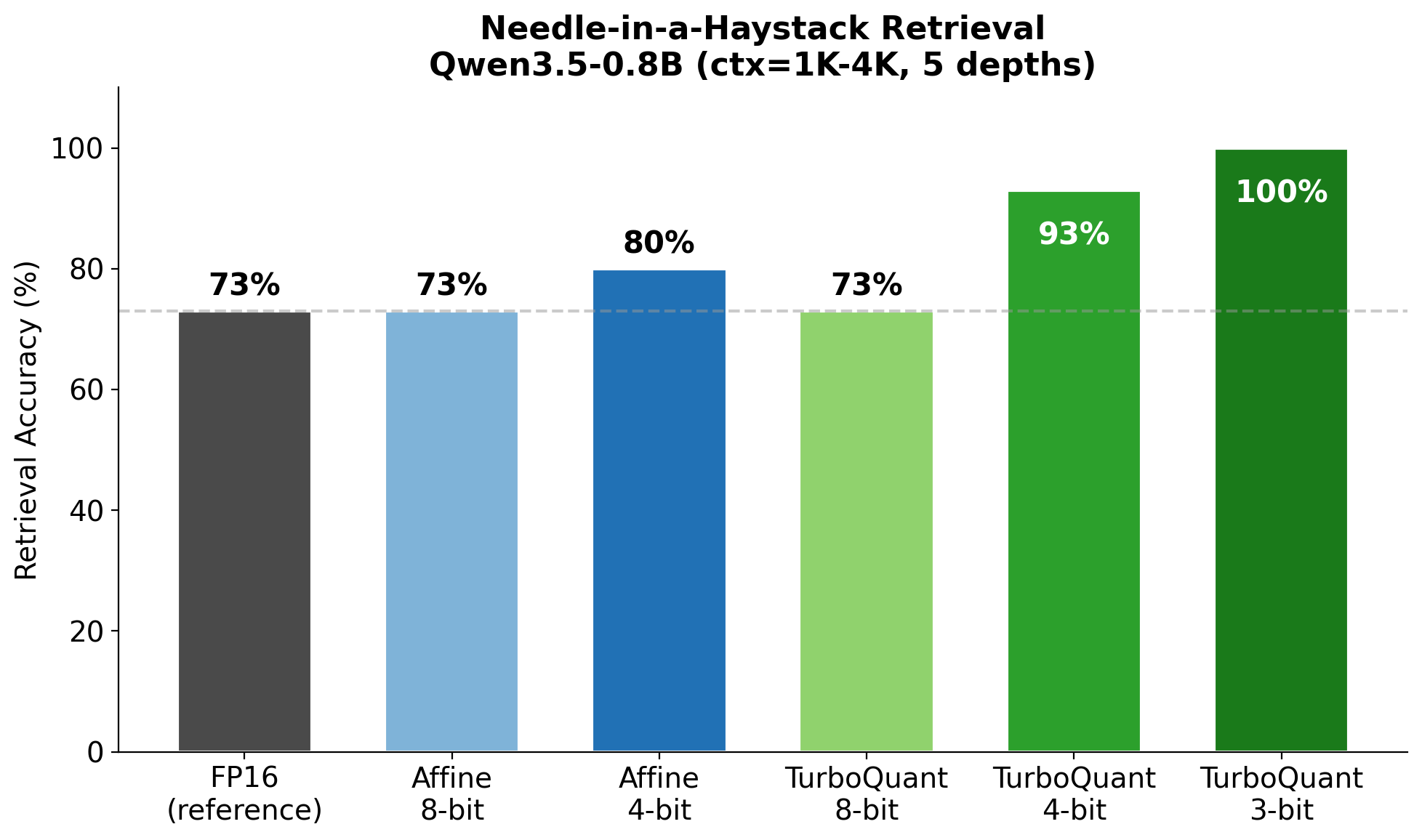

Quality comparison

100% needle retrieval at 4-bit TurboQuant vs 73% for affine — inner products are preserved in rotated space.

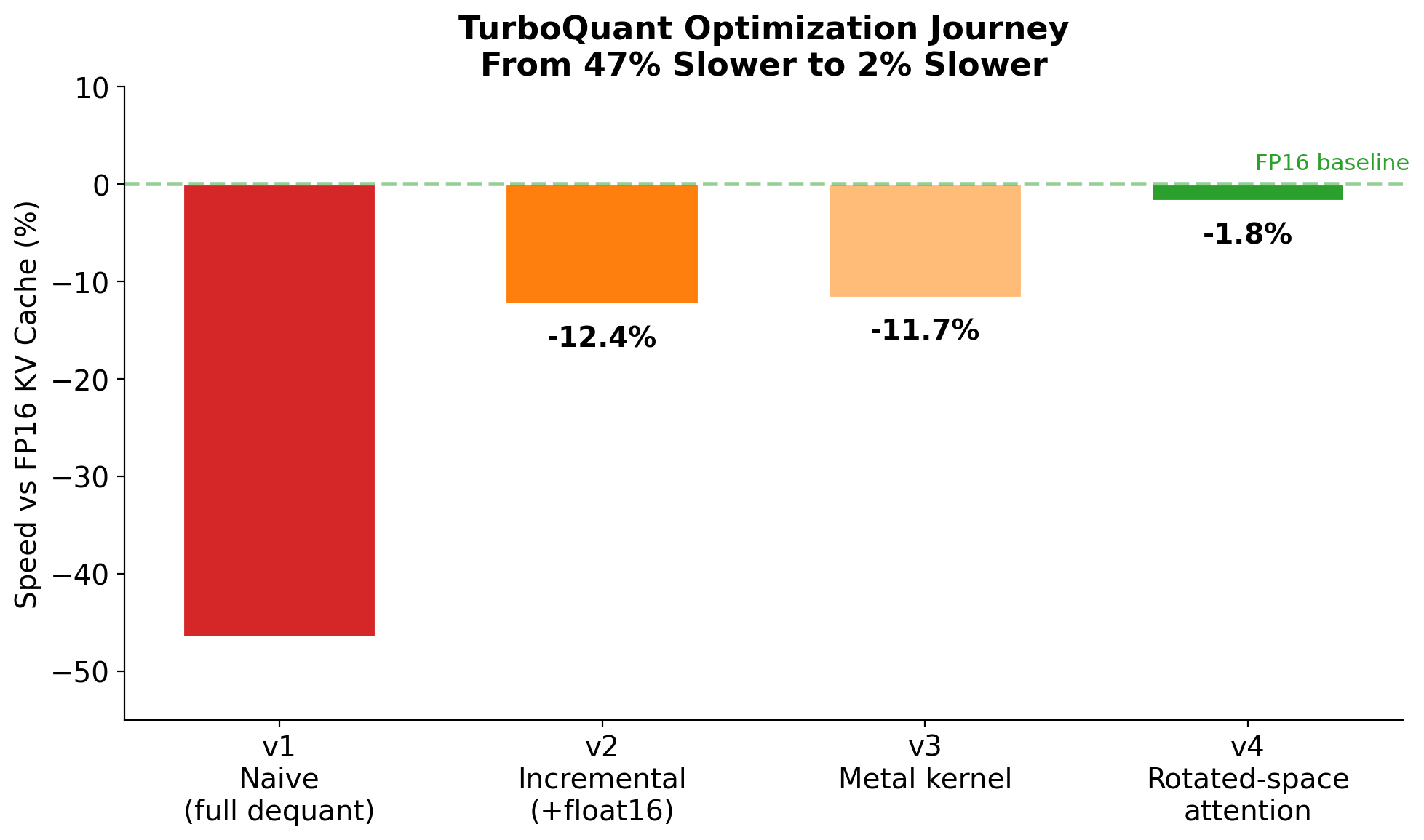

Optimization journey

From 47% slower to 2% slower through incremental dequantization, custom Metal kernels, and rotated-space attention.

Status: TurboQuant is a library primitive you can import directly; the current optiq serve command uses mlx_lm.models.cache.QuantizedKVCache (affine) for the mixed-precision serving path since it integrates with mx.quantized_matmul's fused kernel. A fused TurboQuant serve path is on the roadmap.

TurboQuant KV (library) (Python)

    from optiq.core.turbo_kv_cache import (
        TurboQuantKVCache,
        patch_attention,
    )

    # `model` is an already-loaded model.
    patch_attention()
    cache = model.make_cache()

    # Swap in a TurboQuant cache for every layer that has attention,
    # giving each layer its own rotation seed.
    for i, layer in enumerate(model.layers):
        if hasattr(layer, "self_attn"):
            cache[i] = TurboQuantKVCache(
                head_dim=layer.self_attn.head_dim,
                bits=4,
                seed=42 + i,
            )

Thread 4

Sensitivity-aware LoRA

Standard LoRA uses uniform rank across every adapted layer. OptIQ already knows which layers are sensitive: they got more bits during quantization. optiq lora train reuses that signal, assigning higher adapter rank to sensitive layers and lower rank to robust ones, at the same base budget.

Sensitivity-aware LoRA training

Read (optiq_metadata.json) → Per-layer rank (by_bits / by_kl) → Train (mlx-lm trainer + patches) → Save (PEFT + OptIQ sidecar)

How rank scaling works: with --rank-scaling by_bits, a 4-bit layer gets the base rank (say 8), an 8-bit layer gets 2× that (rank 16), at roughly the same total parameter budget. Layers OptIQ identified as sensitive (the same ones it preserved at 8-bit during quantization) now also get more adapter capacity when you fine-tune. Adapter output is PEFT-compatible (adapter_config.json + adapters.safetensors) plus an OptIQ sidecar (optiq_lora_config.json) that records the per-layer rank distribution.
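As a rough illustration of the by_bits rule, the sketch below scales rank linearly with bit-width. Only the two data points from the text (4-bit gives rank 8, 8-bit gives rank 16) come from the source; the linear formula, the `rank_by_bits` name, and the layer names are assumptions, and the real command may normalize ranks differently to hold the parameter budget.

```python
def rank_by_bits(layer_bits, base_rank=8, base_bits=4):
    """Hypothetical by_bits rule: rank grows linearly with a layer's bit-width."""
    return {
        name: max(1, base_rank * bits // base_bits)
        for name, bits in layer_bits.items()
    }


# Made-up per-layer bit allocation, as quantization metadata might record it.
layer_bits = {
    "layers.0.self_attn.q_proj": 8,   # sensitive layer kept at 8-bit
    "layers.5.mlp.down_proj": 4,      # robust layer at 4-bit
}
ranks = rank_by_bits(layer_bits)
print(ranks)
```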

Train + serve with a LoRA adapter (bash)

    # Train with by_bits scaling (default)
    $ optiq lora train mlx-community/Qwen3.5-9B-OptiQ-4bit \
        --data ./my_training_data \
        --rank 8 --rank-scaling by_bits \
        --iters 1000 -o ./my_adapter

    # Show the per-layer rank distribution
    $ optiq lora info ./my_adapter

    # Serve with the trained adapter applied at startup
    $ optiq serve \
        --model mlx-community/Qwen3.5-9B-OptiQ-4bit \
        --adapter ./my_adapter

Thread 5

Mounted hot-swap adapters

mlx_lm's stock load_adapters merges adapter weights into the base model: fast, but irreversible without a full reload. OptIQ ships a reversible mounted alternative that keeps adapter weights separate and gates them via a ContextVar the server flips per request.

Per-request adapter routing

Base model (loaded once) → MountedLoRA (dict of adapters) → ContextVar (active adapter id) → Forward (residual if active)

Memory math: one OptIQ 9B-4bit base is ~5 GB. Each mounted LoRA adapter is ~50 MB. 10 adapters co-resident ≈ 5.5 GB, vs ~50 GB if you spun up one full model copy per adapter. Switching between adapters in the same Python process is free — no weight reload, no GPU re-upload. ContextVar semantics mean concurrent asyncio tasks / threads with different active adapters don't step on each other.
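The isolation claim follows from how Python's `contextvars` work: each asyncio task runs in its own copy of the context, so a value set in one task is invisible to the others. The toy sketch below shows that behaviour with the standard library only. The `active_adapter` variable, the `ADAPTERS` table, and the arithmetic "model" are stand-ins invented for illustration, not OptIQ's internals.

```python
import asyncio
from contextvars import ContextVar

# Hypothetical stand-in for the ContextVar the server flips per request.
active_adapter = ContextVar("active_adapter", default=None)

# Toy "adapters": each one just adds a constant residual.
ADAPTERS = {"agent-A": 10, "agent-B": 100}


def forward(x):
    base = x * 2                      # stand-in for the shared base model
    name = active_adapter.get()
    if name is None:
        return base                   # no adapter active: base output only
    return base + ADAPTERS[name]      # adapter residual added on top


async def handle_request(name, x):
    token = active_adapter.set(name)  # visible only inside this task
    try:
        await asyncio.sleep(0)        # yield so the tasks interleave
        return forward(x)
    finally:
        active_adapter.reset(token)


async def main():
    return await asyncio.gather(
        handle_request("agent-A", 1),
        handle_request("agent-B", 1),
        handle_request(None, 1),
    )


results = asyncio.run(main())
print(results)  # [12, 102, 2]
```

Even though all three requests interleave at the `await`, each one sees only the adapter it activated.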

Mounted LoRA API (Python)

    from mlx_lm import generate  # assumed: the mlx-lm generate helper
    from optiq.adapters.mount import (
        mount_adapter_on_model,
        AdapterActivation,
    )

    # `model` and `tok` are an already-loaded model and tokenizer; `p` is the prompt.

    # Mount N adapters on one base, stays resident
    mount_adapter_on_model(model, "agent-A", "./adapter_a")
    mount_adapter_on_model(model, "agent-B", "./adapter_b")

    # Per-request: flip the ContextVar, generate, done
    with AdapterActivation("agent-A"):
        out_a = generate(model, tok, prompt=p, max_tokens=50)
    with AdapterActivation("agent-B"):
        out_b = generate(model, tok, prompt=p, max_tokens=50)